There has been much talk about how Barack Obama has leveraged the Internet to publicize his candidacy and to mobilize voters. Obama's ambitious technology plan for the US has also been discussed on many blogs (read here and here).

But one of the things that has caught my attention the most, and that few people have noticed, is the change to the White House website's robots.txt, which is very much in line with what Obama preaches.

What is a Robots.txt?

It is a text file that contains instructions on which pages of a website can and cannot be visited by robots. In other words, it indicates which parts of the website should not be crawled by robots.

Typically, this is content that appears on the website and that you want to be accessible to people browsing it, but that you do not want indexed in search engines. It is also used when a content management system generates duplicate content, which search engines might otherwise penalize.
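To make this concrete, here is a minimal sketch in Python that uses the standard library's `urllib.robotparser` to read a small robots.txt and ask whether a robot may visit a given URL. The file content, the paths and the `example.com` URLs are made up for illustration, and `is_allowed` is just a helper name chosen for this sketch.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; the paths are invented for illustration.
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Disallow: /print/
"""


def is_allowed(robots_txt: str, url: str, user_agent: str = "*") -> bool:
    """Return True if the given robots.txt text lets user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# A page outside the disallowed folders may be crawled...
print(is_allowed(SAMPLE_ROBOTS_TXT, "https://example.com/news/today.html"))
# ...while a page under /private/ may not.
print(is_allowed(SAMPLE_ROBOTS_TXT, "https://example.com/private/report.html"))
```

The first call prints `True` and the second prints `False`. Keep in mind that this only tells well-behaved robots what to skip; the pages remain reachable for anyone browsing the site.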

This file is created by following the instructions that can be found here: Robots, and all the robots that follow the "Robots Exclusion Protocol" undertake to comply with these instructions.

If a website does not have this text file, the robots understand that they can index everything. However, every time a robot looks for the missing robots.txt, the server records a 404 error. It is therefore recommended to create an empty file named robots.txt and upload it via FTP, so that the 404s recorded for the site are real ones that the webmaster can debug.
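As a quick check of the "empty file" case, this small Python sketch feeds an empty robots.txt to the standard library's `urllib.robotparser`, taken here as a stand-in for how a compliant robot might behave. The robot name `ExampleBot` and the URL are hypothetical.

```python
from urllib.robotparser import RobotFileParser

# An empty robots.txt: the file exists (so no 404 is logged) but has no rules.
empty_parser = RobotFileParser()
empty_parser.parse([])

# With no rules, any path is allowed for any robot.
# "ExampleBot" and the URL are made-up names for illustration.
print(empty_parser.can_fetch("ExampleBot", "https://example.com/any/page.html"))
```

This prints `True`: an empty file gives robots the same freedom as a missing one, without the noise in the error log.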

Back to the White House Robots.txt

Until a few days ago, when I explained in class what a robots.txt file is and what the "Robots Exclusion Protocol" is, I gave several examples to illustrate the different types of robots.txt that we can create to give instructions to indexing robots:

- A blank robots.txt page

- A robots.txt page with more or less “normal” instructions

- A totally overblown and out of place robots.txt page.
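One rough way to tell these three kinds of file apart is simply to count how many paths they forbid. The following Python sketch does that; the sample contents are invented stand-ins (not the real files), and `count_disallow_rules` is a helper name chosen for this example.

```python
def count_disallow_rules(robots_txt: str) -> int:
    """Count the Disallow lines that actually name a path."""
    count = 0
    for raw_line in robots_txt.splitlines():
        line = raw_line.split("#", 1)[0].strip()  # drop comments
        field, _, value = line.partition(":")
        if field.strip().lower() == "disallow" and value.strip():
            count += 1
    return count


# Hypothetical samples for the three cases listed above.
BLANK = ""
NORMAL = "User-agent: *\nDisallow: /includes/\n"
# Stand-in for an overblown file: 2000 generated, made-up paths.
OVERBLOWN = "User-agent: *\n" + "".join(
    f"Disallow: /section{i}/text\n" for i in range(2000)
)

for name, text in [("blank", BLANK), ("normal", NORMAL), ("overblown", OVERBLOWN)]:
    print(name, count_disallow_rules(text))
```

With these samples the counts are 0, 1 and 2000. Where "normal" ends and "overblown" begins is a judgment call, so the sketch does not try to set a threshold.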

Well… Obama has "sabotaged" my examples and done away with my example of bad practice regarding robots.txt: the webmaster of the new White House website has created a new robots.txt that is perfectly executed, clear and concise.

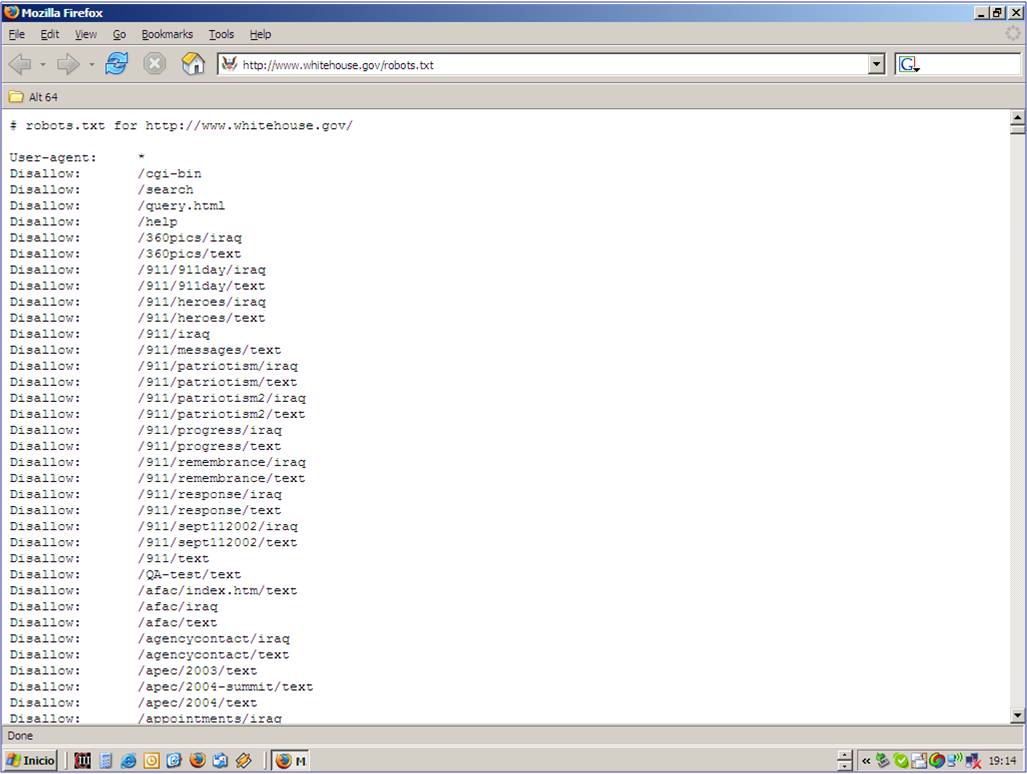

George W. Bush's webmaster had created a robots.txt with thousands and thousands of pages that were forbidden to robots. Needless to say, there was nothing interesting in that content (I once looked through the things they did not want indexed: photos of the First Lady, speeches, etc.). But it did show that the White House had a somewhat archaic concept of what the Internet is and of how content should be published.

The new webmaster, in this sense, shows that he has a much clearer idea of what the website of an institution like the White House should be.

Ok… but what was that Robots.txt like?

Luckily, I always include screenshots of what I explain in my class slides, just in case my internet connection fails or the place where I teach has no connection... (how sad to always have to think about this possibility).

So below these lines (at the end of the post) I include the image that I have archived and that now becomes history… (Look at the scroll bar in the screenshot: it shows the magnitude of the list.)

You can see the current robots.txt page by clicking here: Robots.txt from the White House under Obama.

If you want more information on how to create a Robots.txt or what it is for, you will find it here: Robots.txt and also in the Free Search Engine Optimization Course from our website: Search Engine Optimization Course